One small step for government… one giant step for Verifiable Credentials

Plus: We’re thinking about AI agents the wrong way - and it means people won’t use them

Hi everyone, thanks for coming back to Customer Futures.

Each week I unpack the disruptive shifts around Empowerment Tech. AI Agents, digital wallets, Personal AI and the future of the digital customer relationship.

If you haven’t yet signed up, why not subscribe:

Hi folks,

Another mad week for Empowerment Tech.

But let’s start with one of the biggest news stories of the moment. And why it’s going to be so fundamental to the adoption of AI Agents.

Can we really trust the BigTech AI platforms?

Here’s my pull quote of the week, from the CEO of Anthropic. Writing about the latest pressure from the US Government:

“No amount of intimidation or punishment from the Department of War will change our position on mass domestic surveillance or fully autonomous weapons.”

The AI major has been holding its ground after being asked to support military operations by the US ‘Department of War’.

After they said ‘no’ to Trump, the US Government then blacklisted Anthropic from all government contracts. Suggesting they were a ‘supply chain risk’. And now Anthropic is suing the US Government for a bunch of reasons, including violations of free speech.

I’m sharing these twists and turns in the story not because it’s brilliant and brave stuff from Anthropic (though it is). But because it highlights one of the most important developments happening in AI right now.

That company values and digital trust are fast becoming the fault lines of the AI revolution.

Not the training data, the number of tokens, the frontier models, the whizzy videos, or handwringing about the displacement of jobs.

Values and trust.

Yet that’s the hidden problem. We MUST pay close attention to what we mean by ‘digital trust’.

Why?

Because there are two different types of trust, and we mix them up all the time.

Take this simple example: “I trust my bank”

OK. Do they keep your money safe, make it available when you need it, and enable payments? Yes.

But do they ‘accidentally’ forget to tell you about going overdrawn, then charge you a fee? Also yes.

Here’s the difference:

1. EXPERTISE TRUST

Can you do the thing well, reliably, and scalably?

Do you do what you say you do?

Do you have the capabilities and reputation to make it work?

2. MOTIVE TRUST

This is the important, and so often missing, part:

Are your incentives aligned with mine?

Are you consistent in how you act?

Do you weaken safety, security and privacy for your own benefit?

So pay attention to what we mean by ‘trusting’ a service. Expertise vs motives.

Now look at the US govt example again.

Fun fact: Once Anthropic was shown the door by the US Dept of War, OpenAI swept in and sealed a commercial deal in its place.

Despite the US government's demands for the removal of guardrails around safety and governance. And the absence of the principles around autonomous killing and surveillance.

Once someone shows you who they are, believe them.

I think we now have all the evidence we need about values and digital trust for both Anthropic and OpenAI.

And it’s triggered a whole #QuitChatGPT movement. Ironically, it’s also accelerated the work around ‘portable AI memory’, so you can take your AI history with you when you leave.

So place your bets about who is going to win the AI game long term. Now that company values and digital trust are firmly in the spotlight.

It’s never been more important to understand the future of being a digital customer. So welcome back to the Customer Futures newsletter.

In this week’s edition:

One small step for government… one giant step for Verifiable Credentials

Stripe just made the Agentic Commerce stack way more practical for merchants

We’re thinking about AI agents the wrong way - and it means people won’t use them

… and much more

Put the kettle on, grab a comfy spot on the sofa, and Let’s Go.

One small step for government… one giant step for Verifiable Credentials

It's been 8 years since I first talked about 'verifiable credentials' (VCs) with the UK government. And boy, their announcement this week stands out.

Why?

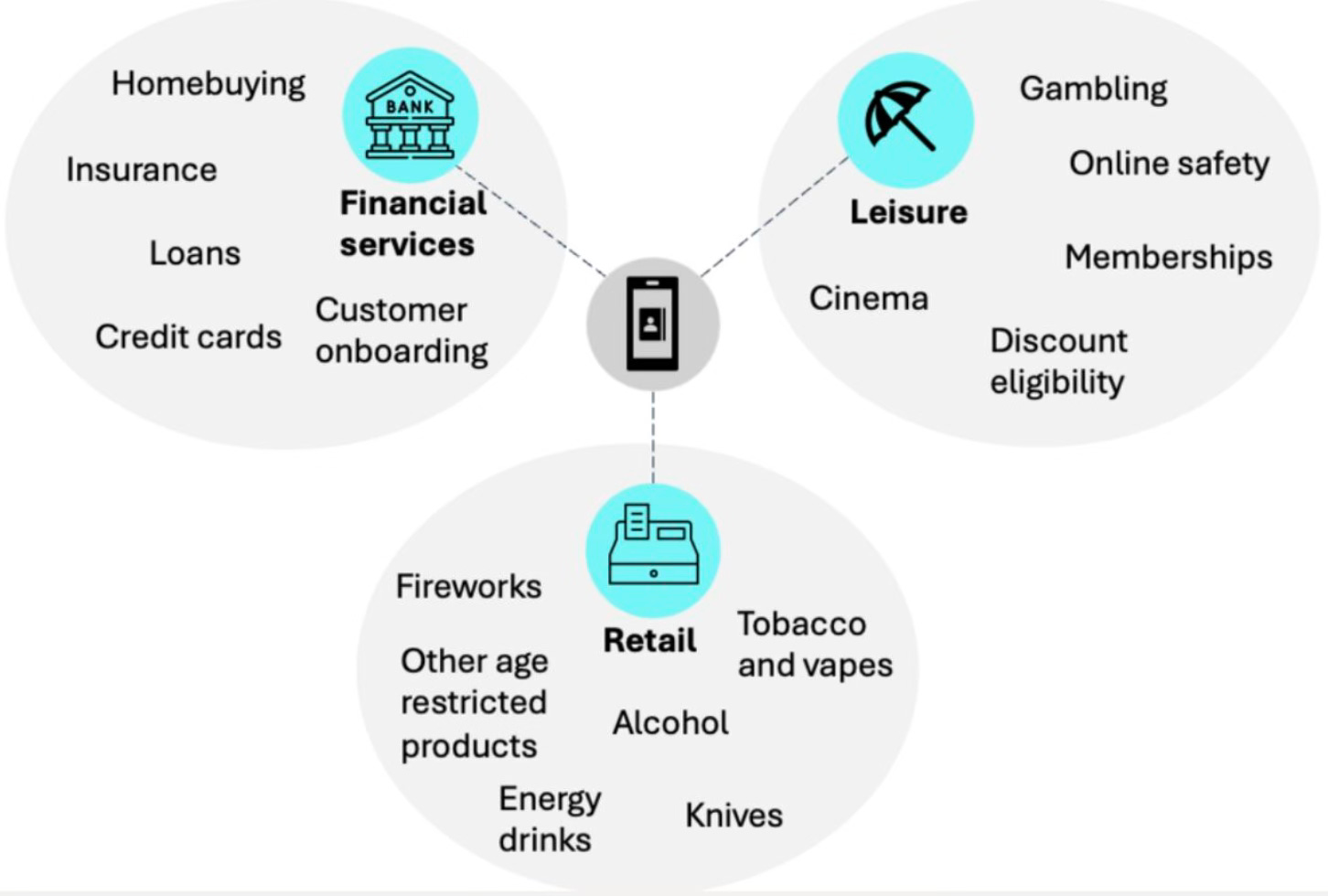

Because the UK Gov has just set out their plans to use verifiable credentials to create a new digital ID for UK citizens.

Not to ‘digitise’ the driving licence or passport (though that’s on the way). But to create a new digital identity credential that citizens can hold on their device, keep under their control, and share only when they choose to.

It's quite a milestone.

Here are a few things that stand out:

#1: It will be based on open tech standards

This will enable "widespread acceptance across a range of businesses and organisations," and the "potential for interoperability."

Fantastic news. It means these new government-issued verifiable credentials will work alongside other products and services where digital ID will be useful. Public and private sector use cases, with digital identity beyond government - quite right.

#2: Government will be offering ‘digital verification services’ too

Alongside this new golden identity credential, they’re planning to offer ways for organisations to check that a digital ID credential is legit.

Helpful, but it’s going to trigger a bunch of issues for the existing private sector identity providers. Who now have the government competing with their products and services.

This move has ruffled more than a few feathers.

Because for the last few years, the UK Govt position has been: “Government shouldn’t be providing digital ID services… we’ll leave that to the private sector… instead, we’ll just create the ‘rules of the road’ and an open framework, so that Government can certify identity providers… and let a healthy marketplace flourish…”

So this twist around verification services is a bit weird. Especially when over 45 of the UK’s identity providers have already been certified against the government framework…

Is the referee now also playing on the pitch?

#3: The verifiable credentials will include "support for privacy-enhancing technologies like 'selective disclosure'..."

This is great news. Because citizens and customers shouldn’t need to share all their details when asked for them.

In fact, in most cases, you DON'T need to know who I am... just that I'm old enough, or qualified/entitled to do something. Proof of 'Over18' is the obvious example, and is the use case they highlight. No more need to share date of birth! Huzzah.
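For the curious, here is a rough sketch of how selective disclosure can work under the hood. It is a toy illustration using salted hashes, not the UK Government's design or any specific standard's wire format. The names `issue`, `present`, `verify` and `ISSUER_KEY` are all hypothetical, and the HMAC with a shared key is only a stand-in for the asymmetric signature a real issuer would use.

```python
import hashlib
import hmac
import json
import secrets

# Hypothetical demo key. A real issuer would sign with a private key,
# and verifiers would check the signature with the matching public key.
ISSUER_KEY = b"demo-issuer-key"


def _digest(salt, name, value):
    """Hash one claim together with its random salt."""
    payload = json.dumps([salt, name, value], separators=(",", ":"))
    return hashlib.sha256(payload.encode()).hexdigest()


def _sign(digests):
    return hmac.new(ISSUER_KEY, json.dumps(digests).encode(), hashlib.sha256).hexdigest()


def issue(claims):
    """Issuer: sign only the salted digests, never the raw claim values."""
    disclosures = {name: (secrets.token_hex(16), value) for name, value in claims.items()}
    digests = sorted(_digest(salt, name, value) for name, (salt, value) in disclosures.items())
    credential = {"digests": digests, "signature": _sign(digests)}
    return credential, disclosures  # the holder keeps the disclosures private


def present(credential, disclosures, reveal):
    """Holder: share the credential plus only the chosen claims."""
    return {"credential": credential, "disclosed": {name: disclosures[name] for name in reveal}}


def verify(presentation):
    """Verifier: check the signature, then check each revealed claim against it."""
    credential = presentation["credential"]
    if not hmac.compare_digest(_sign(credential["digests"]), credential["signature"]):
        raise ValueError("credential signature invalid")
    verified = {}
    for name, (salt, value) in presentation["disclosed"].items():
        if _digest(salt, name, value) not in credential["digests"]:
            raise ValueError(f"claim {name!r} is not part of this credential")
        verified[name] = value
    return verified


credential, disclosures = issue({"over_18": True, "date_of_birth": "1990-01-01"})
presentation = present(credential, disclosures, ["over_18"])
print(verify(presentation))  # {'over_18': True}
```

The verifier learns that the issuer vouched for 'over_18', but never sees the date of birth. All it receives for the hidden claim is a salted hash it cannot reverse.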

#4: They are describing VCs the right way

But overall, I'm mostly pleased - and proud - that much of the wording in the UK Government announcement this week reflects almost exactly what we said about VCs 8 years ago.

Using precisely the same language to describe verifiable credentials. As the safest, most private and scalable way to unlock digital ID at national scale.

Where the individual is at the centre, and in control.

Amazingly, the UK Government is now using phrases like:

"Privacy by design and default"

"Holder Services"

"Verifiable credentials can be confirmed as genuine [...] and have not been faked, tampered with or revoked."

Yes, there's lots to dig into. And it’s only the start of the public consultation. But the direction of travel feels right.

I'm proud to have been there at the start of this movement towards verifiable credentials, digital wallets and portable citizen data. At the start of the shift towards customer control over their data.

And at the start of Empowerment Tech.

It’s one small step for government… and one giant step for Verifiable Credentials.

The next 8 years are going to be wild.

Stripe just made the Agentic Commerce stack way more practical for merchants

This is a quiet, but important development from Stripe.

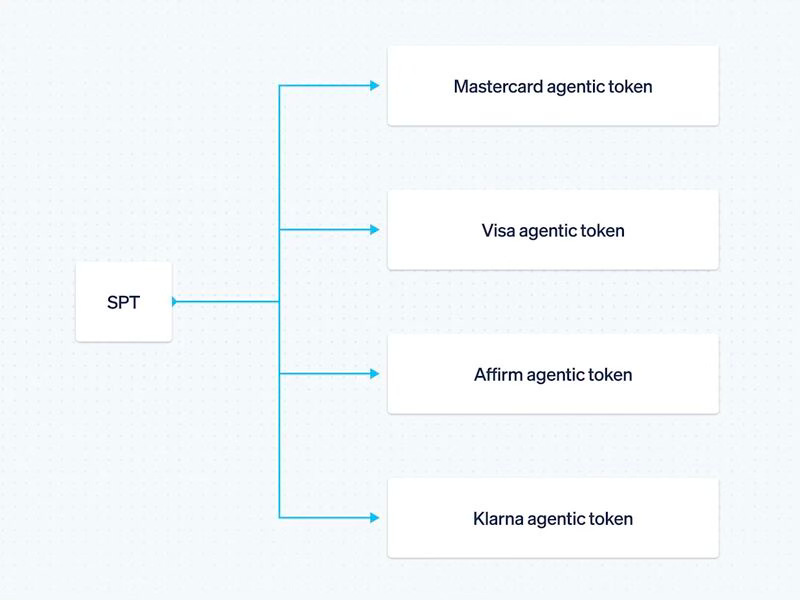

They just upgraded their ‘Shared Payment Tokens’ (SPTs) feature to accept all kinds of payments. Including those from AI Agents.

They are expanding SPT support to include the agentic payment tools from payment networks, like Mastercard's Agent Pay and Visa's Intelligent Commerce, plus BNPL methods like Affirm and Klarna.

Why should you care?

Look closely at what's going on below the waterline, and how SPTs enable agent payments at scale.

It means that sellers can keep integrating SPTs, while behind the scenes, Stripe provisions the correct underlying payment token. AI agents can now initiate payments with customer permission without exposing card credentials.

Stripe says that businesses like Etsy and URBN are already using SPTs, and that existing Stripe sellers can automatically support these added methods for agentic transactions.

Yes, you might have seen that ChatGPT is ‘pulling back from agentic commerce’ (at least that's the current story of the day). Meaning consumers will still need to head to each partner app to check out (i.e. you bounce out from ChatGPT to Booking.com to complete the transaction).

But here's what you need to pay attention to.

Once tokenisation, scoped permissions and confirmation flows are standardised, a customer's AI agent will soon be able to make payments across many more merchants without every provider inventing its own “AI checkout”.

What's that you say? What about governance, and when (not if) things go wrong?

Glad you asked.

There are still huge questions about how we:

Define, capture and record customer intent

Step up customer approvals and authentication

Understand and manage refunds

Handle liability when the buyer is software, not the customer themselves

We can see some of the early answers, but there's so much more to do.

And right now, it's hard to keep up. To stay on top of all the tech, the announcements, the FOMO, the hype, the agentic claw-this, and AI claw-that.

It's why I just set up a new advisory firm, Trusted Agents.

To make sense of the noise. To keep asking the questions about scale and adoption. And to help businesses work out their market response for when customers get their own Personal Agents and automation tools.

You can sign up to our weekly Situation Room newsletter here.

Because Agentic Commerce is coming. Stripe’s founders, the respected Collison brothers, agree. And they don’t make market moves willy-nilly.

So are you ready?

We’re thinking about Personal AI agents the wrong way - and it means people won’t use them

Sarah Gold is one of the OGs on designing for digital trust.

She’s the founder and CEO of Projects by IF, a leading design agency that works with some of the biggest brands on digital trust, UX design patterns for transparency and consent, and much more.

This week, she published a banger of a post about why we’re thinking about agentic trust the wrong way.

Or rather, why we’re thinking about it at the wrong level.

Here’s a snippet, bold mine:

“Anyone building with agentic AI right now is making a bet about what people will trust these tools to do on their behalf.

“What keeps coming up in our work is that people don’t think about privacy at the level of data. They think about it in terms of boundaries.

“Finance shouldn’t bleed into health. Work shouldn’t mix with family. These are felt distinctions about how different parts of a life should stay separate.

“The entire infrastructure we’ve built... consent checkboxes, cookie banners, privacy dashboards - they all operate at the wrong level.

“It asks people to make decisions about data flows they can’t see, in language they don’t use, for risks they can’t easily picture.

“No wonder there’s a gap between what people say and what they do.

“This is the problem agentic design inherits.

“An agent that acts across your whole life - bookings, finances, health, relationships - will cross those boundaries constantly, invisibly, at speed.”

Then she lands the punchline:

“If you’re not designing around the boundaries people actually hold, you’re not building something trustworthy. You’re building something people will eventually reject.

“The strategy question isn’t just what agents can do. It’s what people will let them do and keep letting them do.”

For a while now, I’ve been writing about the idea of ‘OBO’ (on behalf of). Where other people, organisations and things act for you, with ‘delegated authority’.

In the age of Personal Agents, OBO is going to become critical. It will be as important and as far-reaching as KYC and AML are today, as a common framework we use to build trust.

I see the opportunity to develop common ‘OBO frameworks’ to understand:

Which AI agent is which

How agents can prove it

How we can ‘bind’ agents to specific legal entities and people

How we can make each agent’s authority, permissions and scope portable
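To make that list a little more concrete, here is a minimal sketch of what a single OBO grant could look like as data, and how a merchant might check it. Everything here is an assumption for illustration: the `Delegation` fields, the `is_authorised` helper and the example scope names are hypothetical, not part of any existing framework. It also skips the hard part of ‘how agents can prove it’, which would need signed credentials rather than a plain ID comparison.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Delegation:
    """A hypothetical OBO grant: who may act, for whom, and within what limits."""
    agent_id: str         # which agent is which
    principal: str        # the person or legal entity the agent is bound to
    scopes: frozenset     # the actions the agent is permitted to take
    max_amount: float     # spending cap per action
    expires_at: datetime  # authority is time-limited, not open-ended


def is_authorised(grant, agent_id, action, amount, now=None):
    """Return True only if the request falls inside every limit of the grant."""
    now = now or datetime.now(timezone.utc)
    return (
        grant.agent_id == agent_id
        and action in grant.scopes
        and amount <= grant.max_amount
        and now < grant.expires_at
    )


issued = datetime(2025, 1, 1, tzinfo=timezone.utc)
grant = Delegation(
    agent_id="agent-123",
    principal="alice",
    scopes=frozenset({"book_travel"}),
    max_amount=200.0,
    expires_at=issued + timedelta(hours=1),
)

print(is_authorised(grant, "agent-123", "book_travel", 150.0, now=issued))  # True
print(is_authorised(grant, "agent-123", "pay_invoice", 150.0, now=issued))  # False
```

Because the grant is just structured data, it could in principle travel with the agent from one merchant to the next. That is the ‘portable’ part.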

But as Sarah so brilliantly points out, privacy - and people’s perceptions of it - must be designed into how we delegate to AI Agents too.

Because right now we’re falling into the same trap. Of designing AI agents around data and transactions. Not around people and relationships, and how we each think about personal boundaries.

In other words, we need to take the time, with the right groups of experts and designers, to develop trustworthy user interfaces and user experiences around delegation and OBO too.

OTHER THINGS

There are far too many interesting and important Customer Futures things to include this week.

So here are some more links to chew on:

News: Utah passes its landmark ‘SEDI’ (State Endorsed IDentity) regulation

Article: Anthropic Could Win The Consumer Market

Post: Why Trust Can’t Be Hard-Coded Into Agentic Systems

Idea: We’re at a turning point for AI portability

Post: Is OpenAI the Yahoo of AI?

And that’s a wrap. Stay tuned for more Customer Futures soon, both here and over at LinkedIn.
